https://spark.apache.org/docs/1.1.1/quick-start.html

Operating system: Ubuntu 16.04;

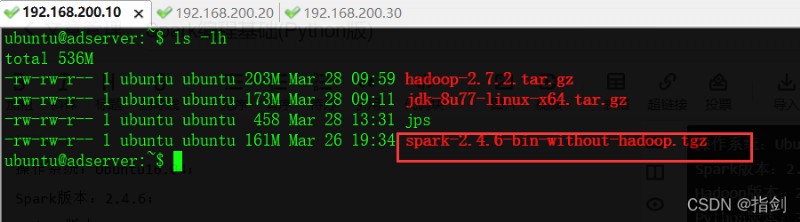

Spark version: 2.4.6;

Hadoop version: 2.7.2;

Python version: 3.5.

Click to download: spark-2.4.6-bin-without-hadoop.tgz

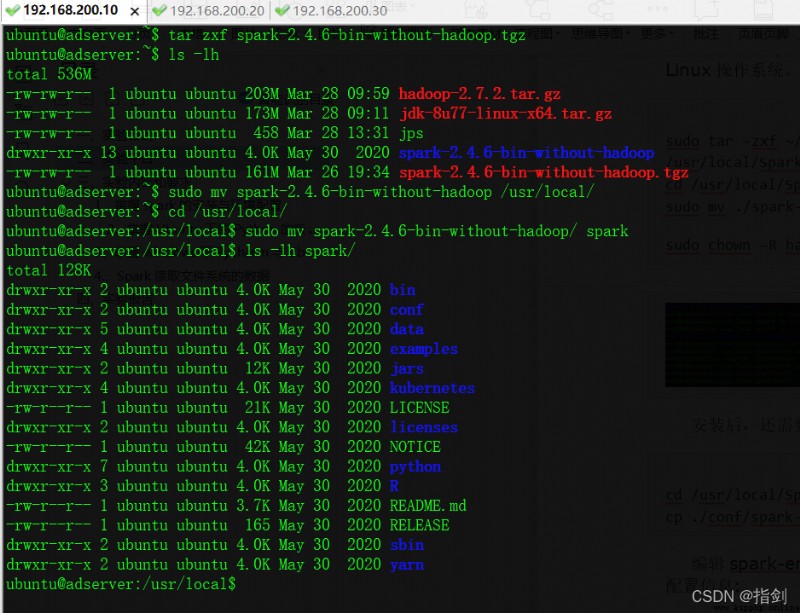

[email protected]:~$ tar zxf spark-2.4.6-bin-without-hadoop.tgz

[email protected]:~$ ls -lh

total 536M

-rw-rw-r-- 1 ubuntu ubuntu 203M Mar 28 09:59 hadoop-2.7.2.tar.gz

-rw-rw-r-- 1 ubuntu ubuntu 173M Mar 28 09:11 jdk-8u77-linux-x64.tar.gz

-rw-rw-r-- 1 ubuntu ubuntu 458 Mar 28 13:31 jps

drwxr-xr-x 13 ubuntu ubuntu 4.0K May 30 2020 spark-2.4.6-bin-without-hadoop

-rw-rw-r-- 1 ubuntu ubuntu 161M Mar 26 19:34 spark-2.4.6-bin-without-hadoop.tgz

[email protected]:~$ sudo mv spark-2.4.6-bin-without-hadoop /usr/local/

[email protected]:~$ cd /usr/local/

[email protected]:/usr/local$ sudo mv spark-2.4.6-bin-without-hadoop/ spark

[email protected]:/usr/local$ ls -lh spark/

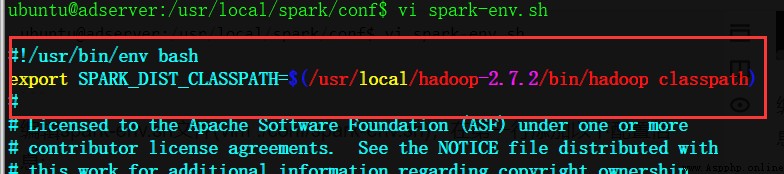

[email protected]:~$ cd /usr/local/spark/conf/

[email protected]:/usr/local/spark/conf$ pwd

/usr/local/spark/conf

[email protected]:/usr/local/spark/conf$ cp spark-env.sh.template spark-env.sh

[email protected]:/usr/local/spark/conf$ vi spark-env.sh

Edit the spark-env.sh file (vim ./conf/spark-env.sh) and add the following configuration on the first line:

export SPARK_DIST_CLASSPATH=$(/usr/local/hadoop-2.7.2/bin/hadoop classpath)

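With this setting in place, Spark can write data to the Hadoop Distributed File System (HDFS) and read data back from it. Without it, Spark can only read and write local data and cannot access HDFS. Once configured, Spark is ready to use directly; unlike Hadoop, it does not need any startup commands. To verify that the installation succeeded, run one of the examples bundled with Spark.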
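As a minimal sketch of the configuration step above, the following Python helper prepends the export line to a spark-env.sh file only when it is not already there, so it can be run repeatedly. The function names `classpath_export` and `ensure_first_line` are hypothetical, and the Hadoop location is the one used in this guide; adjust it for your own layout.

```python
from pathlib import Path


def classpath_export(hadoop_home):
    """Build the spark-env.sh line that points Spark at Hadoop's jars."""
    return "export SPARK_DIST_CLASSPATH=$({}/bin/hadoop classpath)".format(hadoop_home)


def ensure_first_line(env_file, line):
    """Prepend `line` to env_file unless the file already contains it.

    Returns True when the file was modified, False otherwise.
    """
    path = Path(env_file)
    text = path.read_text() if path.exists() else ""
    if line in text.splitlines():
        return False  # already configured, leave the file untouched
    path.write_text(line + "\n" + text)
    return True
```

For this guide's layout the call would be `ensure_first_line("/usr/local/spark/conf/spark-env.sh", classpath_export("/usr/local/hadoop-2.7.2"))`.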

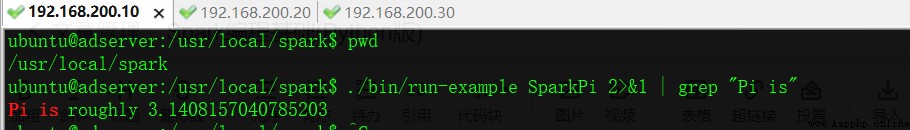

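The run prints a large amount of log output, which makes the result hard to spot. You can filter it with grep (the 2>&1 in the command redirects stderr to stdout; without it, the log messages, which are written to stderr, would bypass grep and still appear on the screen):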

[email protected]:/usr/local/spark$ ./bin/run-example SparkPi 2>&1 | grep "Pi is"
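The same filtering idea can be sketched in Python: merge stderr into stdout, then keep only the lines that contain the search text. The helper name `run_and_filter` is hypothetical, and the demo command is a stand-in that imitates a noisy program so the sketch can be tried without Spark; the value it prints is not real SparkPi output.

```python
import subprocess
import sys


def run_and_filter(cmd, needle):
    """Run cmd, merge stderr into stdout (like 2>&1), keep lines containing needle."""
    result = subprocess.run(
        cmd,
        stdout=subprocess.PIPE,
        stderr=subprocess.STDOUT,  # same effect as 2>&1 in the shell
        universal_newlines=True,
    )
    return [line for line in result.stdout.splitlines() if needle in line]


# Stand-in command: logs to stderr and prints a result line to stdout.
demo_cmd = [
    sys.executable,
    "-c",
    "import sys; print('INFO noisy log', file=sys.stderr); print('Pi is roughly 3.14')",
]
print(run_and_filter(demo_cmd, "Pi is"))
```

With Spark installed as described here, `run_and_filter(["./bin/run-example", "SparkPi"], "Pi is")` run from /usr/local/spark should behave like the grep pipeline above.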

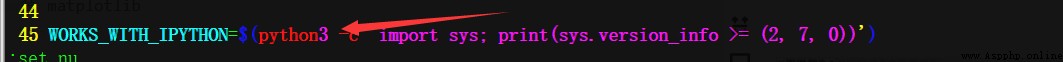

Edit the file /usr/local/spark/bin/pyspark.

On line 45, change python to python3.
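As an alternative sketch (not the approach taken in this guide), the interpreter can also be chosen without editing the script, because Spark's launcher reads the PYSPARK_PYTHON environment variable. The helper name `pyspark_env` below is hypothetical; it only builds the environment and does not start Spark itself.

```python
import os


def pyspark_env(python_exe="python3", base_env=None):
    """Return a copy of the environment with PYSPARK_PYTHON set.

    base_env defaults to the current process environment.
    """
    env = dict(os.environ if base_env is None else base_env)
    env["PYSPARK_PYTHON"] = python_exe  # interpreter the pyspark launcher should use
    return env
```

For example, `subprocess.call(["/usr/local/spark/bin/pyspark"], env=pyspark_env())` would start the shell with Python 3, assuming Spark is installed at the path used in this guide.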

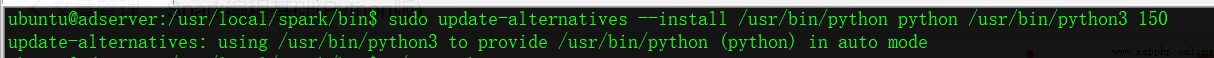

Run the command sudo update-alternatives --install /usr/bin/python python /usr/bin/python3 150

[email protected]:/usr/local/spark/bin$ sudo update-alternatives --install /usr/bin/python python /usr/bin/python3 150

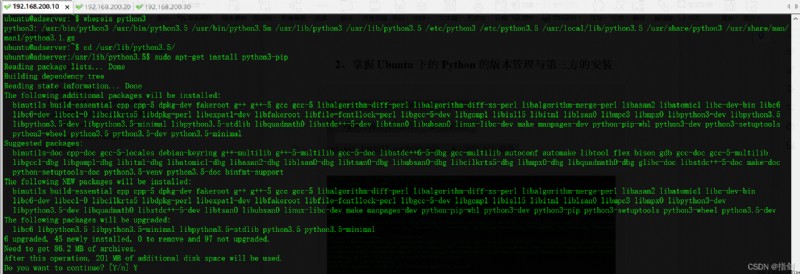

whereis python3 # locate the Python 3 installation directories

cd /usr/lib/python3.5 # change to that directory

sudo apt-get install python3-pip # install pip

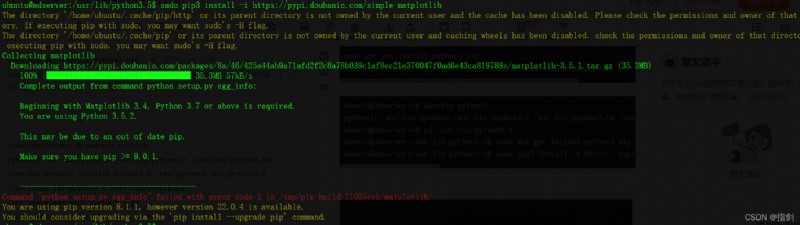

sudo pip3 install -i https://pypi.doubanio.com/simple matplotlib # install matplotlib

[email protected]:~$ whereis python3

python3: /usr/bin/python3 /usr/bin/python3.5 /usr/bin/python3.5m /usr/lib/python3 /usr/lib/python3.5 /etc/python3 /etc/python3.5 /usr/local/lib/python3.5 /usr/share/python3 /usr/share/man/man1/python3.1.gz

[email protected]:~$ cd /usr/lib/python3.5/

[email protected]:/usr/lib/python3.5$ sudo apt-get install python3-pip

[email protected]:/usr/lib/python3.5$ sudo pip3 install -i https://pypi.doubanio.com/simple matplotlib

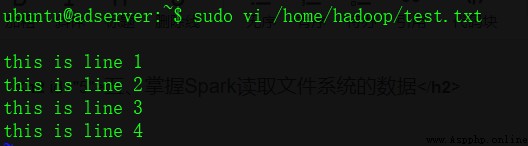

First, create a test file:

$ vi /home/hadoop/test.txt

this is line 1

this is line 2

this is line 3

this is line 4
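For readers who prefer to script this step, here is a minimal sketch that writes the same four lines and checks the result. It writes to a temporary directory as a stand-in for /home/hadoop so it can run anywhere; the variable names are illustrative. The 60-byte size it reports is the same size that `hadoop fs -ls` shows for the file once it is uploaded to HDFS later in this guide.

```python
import os
import tempfile

# The four lines of the test file, each terminated by a newline.
lines = ["this is line {}".format(i) for i in range(1, 5)]
content = "\n".join(lines) + "\n"

# A temporary directory stands in for /home/hadoop so the sketch runs anywhere.
test_path = os.path.join(tempfile.mkdtemp(), "test.txt")
with open(test_path, "w", newline="\n") as f:
    f.write(content)

# Count the lines back, the same check lines.count() performs in pyspark.
with open(test_path) as f:
    line_count = sum(1 for _ in f)

print(line_count, os.path.getsize(test_path))  # prints: 4 60
```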

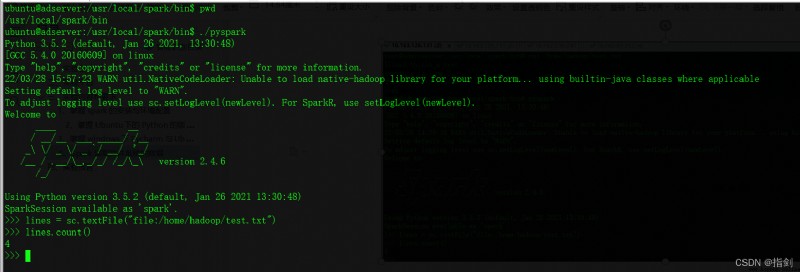

[email protected]:/usr/local/spark/bin$ pwd

/usr/local/spark/bin

[email protected]:/usr/local/spark/bin$ ./pyspark

Python 3.5.2 (default, Jan 26 2021, 13:30:48)

[GCC 5.4.0 20160609] on linux

Type "help", "copyright", "credits" or "license" for more information.

22/03/28 15:57:23 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/__ / .__/\_,_/_/ /_/\_\ version 2.4.6

/_/

Using Python version 3.5.2 (default, Jan 26 2021 13:30:48)

SparkSession available as 'spark'.

>>> lines = sc.textFile("file:/home/hadoop/test.txt")

>>> lines.count()

4

>>>

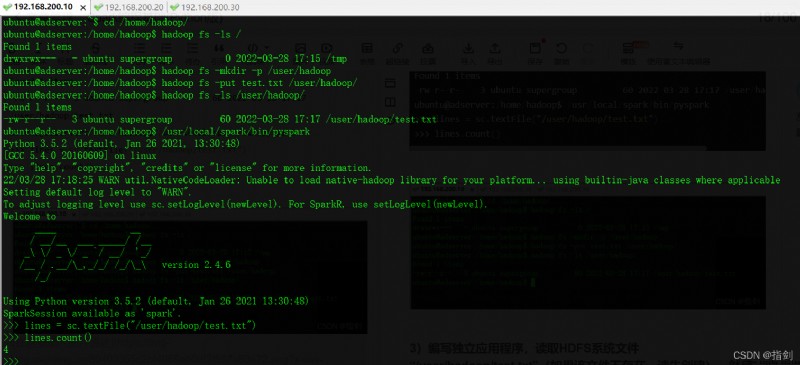

[email protected]:~$ cd /home/hadoop/

[email protected]:/home/hadoop$ hadoop fs -ls /

Found 1 items

drwxrwx--- - ubuntu supergroup 0 2022-03-28 17:15 /tmp

[email protected]:/home/hadoop$ hadoop fs -mkdir -p /user/hadoop

[email protected]:/home/hadoop$ hadoop fs -put test.txt /user/hadoop/

[email protected]:/home/hadoop$ hadoop fs -ls /user/hadoop/

Found 1 items

-rw-r--r-- 3 ubuntu supergroup 60 2022-03-28 17:17 /user/hadoop/test.txt

[email protected]:/home/hadoop$ /usr/local/spark/bin/pyspark

>>> lines = sc.textFile("/user/hadoop/test.txt")

>>> lines.count()
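The two pyspark sessions differ only in the path given to sc.textFile. As a small sketch of that difference: the first path carries an explicit file: scheme, while the second has no scheme at all and, as far as I understand Hadoop's path handling, is resolved against the configured default filesystem (HDFS in this setup). The hdfs:// URI below, including its host and port, is a hypothetical example and not taken from this guide.

```python
from urllib.parse import urlparse

paths = [
    "file:/home/hadoop/test.txt",  # local file, as in the first pyspark session
    "/user/hadoop/test.txt",       # no scheme: left to the default filesystem
    "hdfs://namenode:9000/user/hadoop/test.txt",  # hypothetical explicit HDFS URI
]

# An empty scheme means the path does not say which filesystem it lives on.
schemes = [urlparse(p).scheme or "(default filesystem)" for p in paths]
for p, s in zip(paths, schemes):
    print(s, "->", p)
```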

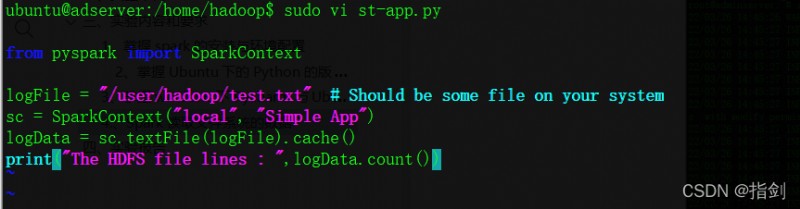

[email protected]:/home/hadoop$ sudo vi st-app.py

from pyspark import SparkContext

logFile = "/user/hadoop/test.txt" # HDFS path of the test file uploaded above

sc = SparkContext("local", "Simple App") # a master set in code takes precedence over spark-submit's --master flag

logData = sc.textFile(logFile).cache()

print("The HDFS file lines : ",logData.count())

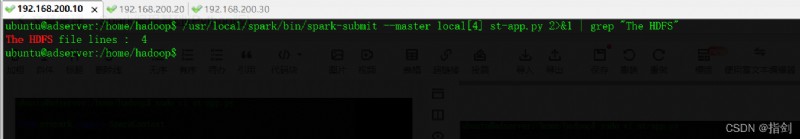

[email protected]:/home/hadoop$ /usr/local/spark/bin/spark-submit --master local[4] st-app.py 2>&1 | grep "The HDFS"