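# Task: download the images from the first ten pages

# Page 1: https://sc.chinaz.com/tupian/qinglvtupian.html

# Page 2: https://sc.chinaz.com/tupian/qinglvtupian_2.html (later pages follow the same qinglvtupian_<n>.html pattern)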

import os
import urllib.request

from lxml import etree

def creat_request(page):
    # Page 1 has no numeric suffix; every later page ends in _<page>.html
    if page == 1:
        url = 'https://sc.chinaz.com/tupian/qinglvtupian.html'
    else:
        url = 'https://sc.chinaz.com/tupian/qinglvtupian_' + str(page) + '.html'
    headers = {
        'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/102.0.5005.124 Safari/537.36 Edg/102.0.1245.44'
    }
    request = urllib.request.Request(url=url, headers=headers)
    return request

def get_content(request):
    response = urllib.request.urlopen(request)
    content = response.read().decode('utf-8')
    return content

def down_load(content):
    # Download every image on one page
    tree = etree.HTML(content)
    name_list = tree.xpath("//div[@id='container']//a/img/@alt")
    # Image-heavy sites usually lazy-load their images: if @src only yields placeholders,
    # look in the page source for the attribute that holds the real image URL
    src_list = tree.xpath("//div[@id='container']//a/img/@src")
    # print(len(name_list), len(src_list))
    os.makedirs('./file', exist_ok=True)  # urlretrieve fails if the target folder does not exist
    for name, src in zip(name_list, src_list):
        url = 'https:' + src
        url = url.replace('_s', '')  # removing _s from the link downloads the high-resolution image
        urllib.request.urlretrieve(url=url, filename='./file/' + name + '.jpg')

if __name__ == '__main__':
    start_page = int(input('Enter the start page: '))
    end_page = int(input('Enter the end page: '))
    for page in range(start_page, end_page + 1):
        # (1) Build the customized request object
        request = creat_request(page)
        # (2) Fetch the page source
        content = get_content(request)
        # (3) Download the images
        down_load(content)
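A minimal sketch that isolates the two URL rules the script relies on, so they can be checked without network access. The helper names `page_url` and `full_size_url` are hypothetical. It also narrows the `_s` trick: `str.replace('_s', '')` removes every `_s` in the URL, so a hypothetical path such as `//img.example.com/files_s/pic_s.jpg` would lose the `_s` in its directory name too. The sketch removes `_s` only when it sits right before the file extension.

```python
import posixpath

BASE = 'https://sc.chinaz.com/tupian/qinglvtupian'


def page_url(page):
    """Page 1 has no suffix; page n (n >= 2) ends in _n.html."""
    if page == 1:
        return BASE + '.html'
    return f'{BASE}_{page}.html'


def full_size_url(src):
    """Turn a protocol-relative thumbnail src into a full-size image URL.

    Only a trailing "_s" directly before the extension is dropped, so an
    "_s" elsewhere in the path is left untouched.
    """
    url = 'https:' + src if src.startswith('//') else src
    root, ext = posixpath.splitext(url)
    if root.endswith('_s'):
        root = root[:-2]
    return root + ext


print(page_url(1))
print(page_url(2))
# Hypothetical thumbnail path, not taken from the real site
print(full_size_url('//img.example.com/files_s/pic_s.jpg'))
```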

1. Install JsonPath

pip install jsonpath -i https://pypi.douban.com/simple

2. Example: parsing JSON

JSON data (saved as file/jsonpath.json):

{
    "store": {
        "book": [
            {
                "category": "修真",
                "author": "六道",
                "title": "壞蛋是怎樣煉成的",
                "price": 8.95
            },
            {
                "category": "修真",
                "author": "我吃西紅柿",
                "title": "吞噬星空",
                "price": 9.96
            },
            {
                "category": "修真",
                "author": "唐家三少",
                "title": "斗羅大陸",
                "price": 9.45
            },
            {
                "category": "修真",
                "author": "南派三叔",
                "title": "新城變",
                "price": 8.72
            }
        ],
        "bicycle": {
            "color": "黑色",
            "price": 19.95
        }
    }
}

(1) Get the authors of all books in the store

import json

import jsonpath

obj = json.load(open('file/jsonpath.json','r',encoding='utf-8'))

# Authors of all books in the store: book[*] means every book, book[0] is the first book (indices start at 0)

author_list = jsonpath.jsonpath(obj,'$.store.book[*].author')

print(author_list)

(2) Get every author in the document

# Every author at any depth: .. is recursive descent

author_list = jsonpath.jsonpath(obj,'$..author')

print(author_list)
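To make the `..` (recursive descent) operator concrete, here is a plain-Python sketch of roughly what `$..author` and `$..price` do: walk every dict and list and collect each value stored under the key. The function name `descend` is hypothetical, and the data is a trimmed copy of the sample JSON. It yields the same values as the jsonpath library, although the library's ordering is not guaranteed to match this walk.

```python
def descend(node, key):
    """Yield every value stored under `key`, at any depth, in document order."""
    if isinstance(node, dict):
        for k, v in node.items():
            if k == key:
                yield v
            yield from descend(v, key)  # keep searching inside the value too
    elif isinstance(node, list):
        for item in node:
            yield from descend(item, key)


# Trimmed copy of the sample data above
store = {
    "store": {
        "book": [
            {"author": "六道", "title": "壞蛋是怎樣煉成的", "price": 8.95},
            {"author": "我吃西紅柿", "title": "吞噬星空", "price": 9.96},
            {"author": "唐家三少", "title": "斗羅大陸", "price": 9.45},
            {"author": "南派三叔", "title": "新城變", "price": 8.72},
        ],
        "bicycle": {"color": "黑色", "price": 19.95},
    }
}

print(list(descend(store, 'author')))
print(list(descend(store, 'price')))  # book prices plus the bicycle's price
```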

(3) All elements directly under store

# All elements directly under store (the book list and the bicycle)

tag_list = jsonpath.jsonpath(obj,'$.store.*')

print(tag_list)

(4) Every price under store

# Every price under store, at any depth (the books' prices and the bicycle's price)

price_list = jsonpath.jsonpath(obj,'$.store..price')

print(price_list)

(5) The third book

# The third book (indices start at 0, so book[2])

book = jsonpath.jsonpath(obj,'$..book[2]')

print(book)

(6) The last book

# The last book: @.length is the array's length, so (@.length - 1) is the last index

book = jsonpath.jsonpath(obj,'$..book[(@.length - 1)]')

print(book)

(7) The first two books

# The first two books

book_list = jsonpath.jsonpath(obj,'$..book[0,1]') # equivalent to the slice form below

book_list = jsonpath.jsonpath(obj,'$..book[:2]')

print(book_list)

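(8) Filter the books that have an isbn field. A filter expression needs a question mark in front of the parentheses: ?(...)

# Books that have an isbn field; the sample data has none, so the jsonpath library returns False here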

book_list = jsonpath.jsonpath(obj,'$..book[?(@.isbn)]')

print(book_list)

(9) Which books cost more than 9 yuan

# Books priced above 9 yuan

book_list = jsonpath.jsonpath(obj,'$..book[?(@.price>9)]')

print(book_list)
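The filter expressions in (8) and (9) correspond roughly to list comprehensions over the book list. Here `@` stands for the current book, and `?(...)` keeps the books for which the expression holds. This plain-Python sketch uses a trimmed copy of the sample data. One difference: the jsonpath library returns False when nothing matches, while a comprehension returns an empty list.

```python
# Trimmed copy of the sample book list
books = [
    {"title": "壞蛋是怎樣煉成的", "price": 8.95},
    {"title": "吞噬星空", "price": 9.96},
    {"title": "斗羅大陸", "price": 9.45},
    {"title": "新城變", "price": 8.72},
]

# $..book[?(@.isbn)]: books that have an "isbn" key
with_isbn = [b for b in books if 'isbn' in b]

# $..book[?(@.price>9)]: titles of books priced above 9
over_nine = [b['title'] for b in books if b['price'] > 9]

print(with_isbn)
print(over_nine)
```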

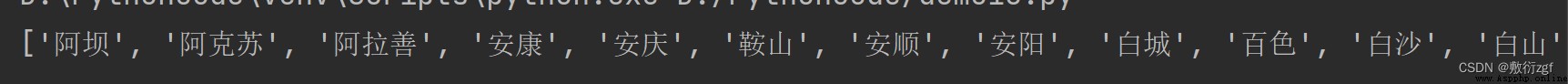

import urllib.request

url = 'http://dianying.taobao.com/cityAction.json?activityId&_ksTS=1656042784573_63&jsoncallback=jsonp64&action=cityAction&n_s=new&event_submit_doGetAllRegion=true'

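headers = {

# HTTP/2 pseudo-headers (names starting with ':') cannot be sent through urllib, which rejects such header names, so they stay commented out

# ':authority': ' dianying.taobao.com',

# ':method': ' GET',

# ':path': ' /cityAction.json?activityId&_ksTS=1656042784573_63&jsoncallback=jsonp64&action=cityAction&n_s=new&event_submit_doGetAllRegion=true',

# ':scheme': ' https',

'accept':'*/*',

# accept-encoding stays commented out: urllib does not decompress gzip responses, so decode('utf-8') would fail

# 'accept-encoding': ' gzip, deflate, br',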

'accept-language':'zh-CN,zh;q=0.9,en;q=0.8,en-GB;q=0.7,en-US;q=0.6',

'cookie':'cna=zIsvG8QofGgCAXAc0HQF5jMC;ariaDefaultTheme=undefined;xlly_s=1;t=9ac1f71719420207d1f87d27eb676a4c;cookie2=1780e3cc3bb6e7514cd141e9f837bf83;v=0;_tb_token_=fb13e3ee13e77;_m_h5_tk=e38bb4bac8606d14f4e3e90d0499f94a_1656050157762;_m_h5_tk_enc=76f353efff1883eec471912a42ecc783;tfstk=cfOCBVmvikqQn3HzTegZLGZ2rC15Z9ic58j9RcpoM5T02MTCixVVccfNfkfPyN1..;l=eBMAoWzqL6O1ZKDhBOfwhurza77OGIRAguPzaNbMiOCP_gCp5jA1W6b5MMT9CnGVhsT2R3uKagAwBeYBqI2jjqjqnZ2bjbkmn;isg=BMrKoAG5nLBFjxABGbp50JlLG7Bsu04VAQVWEFQDG52oB2rBPEsiJUJxF3Pb98at',

'referer':'https://www.taobao.com/',

'sec-ch-ua': '"Not A;Brand";v="99", "Chromium";v="102", "Microsoft Edge";v="102"',

'sec-ch-ua-mobile': '?0',

'sec-ch-ua-platform': '"Windows"',

'sec-fetch-dest': 'script',

'sec-fetch-mode': 'no-cors',

'sec-fetch-site': 'same-site',

'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/102.0.5005.124 Safari/537.36 Edg/102.0.1245.44',

}

request = urllib.request.Request(url=url,headers=headers)

response = urllib.request.urlopen(request)

content = response.read().decode('utf-8')

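# The response is JSONP, i.e. jsonp64({...}); keep only the JSON between the first '(' and the last ')'
content = content[content.index('(') + 1:content.rindex(')')]

with open('./file/jsonpath解析淘票票.json', 'w', encoding='utf-8') as fp:
    fp.write(content)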

import json

import jsonpath

obj = json.load(open('./file/jsonpath解析淘票票.json','r',encoding='utf-8'))

# Every regionName (city name) at any depth
city_list = jsonpath.jsonpath(obj, '$..regionName')

print(city_list)
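Two pitfalls in this example can be handled in a small helper. First, slicing a JSONP body by splitting on the first `(` and the first `)` breaks when the JSON itself contains a parenthesis. Second, if `accept-encoding: gzip` is sent, urllib hands back the compressed bytes unchanged. The following is a minimal sketch with hypothetical helper names. With urllib you would pass `response.headers.get('Content-Encoding')` as `content_encoding`. The payload below is made up and only mimics the callback shape; it is not Taobao's real response format.

```python
import gzip
import json
import re


def decode_body(raw, content_encoding=None):
    """Gunzip the body if the server compressed it, then decode it as UTF-8."""
    if content_encoding == 'gzip':
        raw = gzip.decompress(raw)
    return raw.decode('utf-8')


def strip_jsonp(text):
    """Return the JSON between the callback's opening '(' and its final ')'."""
    match = re.search(r'^\s*[\w$.]+\s*\((.*)\)\s*;?\s*$', text, re.S)
    if match is None:
        raise ValueError('response is not wrapped in a JSONP callback')
    return match.group(1)


# Hypothetical response: the field names and the region value are made up
raw = gzip.compress('jsonp64({"returnValue": [{"regionName": "Foo (test)"}]});'.encode('utf-8'))
obj = json.loads(strip_jsonp(decode_body(raw, 'gzip')))
print(obj['returnValue'][0]['regionName'])
```

The greedy `(.*)` runs to the last `)` before an optional `;`, so parentheses inside string values survive. Splitting on the first `)` would cut this payload off after `Foo (test`.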

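Short name: bs4

What it is: like lxml, BeautifulSoup parses HTML and extracts data from it (it can use lxml as its underlying parser)

Pros: a user-friendly interface that is easy to use

Cons: slower than lxml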

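【Usage steps】

1. Install: pip install bs4 -i https://pypi.douban.com/simple

2. Import: from bs4 import BeautifulSoup

3. Create the object. From a server response:

soup = BeautifulSoup(response.read().decode(), 'lxml')

From a local file:

soup = BeautifulSoup(open('1.html', encoding='utf-8'), 'lxml')

Example: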

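# 1. Learn the basic bs4 syntax by parsing a local file

from bs4 import BeautifulSoup

# open() uses the system's default encoding (gbk on Chinese-locale Windows), so specify utf-8 explicitly

soup = BeautifulSoup(open('file/bs4解析本地文件.html', encoding='utf-8'), 'lxml')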

# Find nodes by tag name

print(soup.a) # the first matching tag

print(soup.a.attrs) # the tag's attributes and their values, as a dict

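# Some bs4 functions

# (1) find

# Returns the first matching tag

print(soup.find('a'))

# Find the a tag whose title attribute is a2

print(soup.find('a',title='a2'))

# Find a tag by its class value; write class_ with an underscore because class is a Python keyword

print(soup.find('a',class_='a1'))

# (2) find_all returns a list of all matching tags, here every a tag

print(soup.find_all('a'))

# To match several tag names, pass a list to find_all

print(soup.find_all(['a','span']))

# limit returns only the first n matches

print(soup.find_all('li',limit=2))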

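# (3) select (recommended)

# select takes a CSS selector and returns a list of all matching tags

print(soup.select('a'))

# A leading . selects by class; this is called a class selector

print(soup.select('.a1'))

# A leading # selects by id; this is called an id selector

print(soup.select('#l1'))

# Attribute selector: li tags that have an id attribute

print(soup.select('li[id]'))

# li tags whose id is l2

print(soup.select('li[id="l2"]'))

# Hierarchy selectors

# Descendant selector: li tags anywhere inside a div

print(soup.select('div li'))

# Child selector: only direct children; the spaces around > are optional

print(soup.select('div > ul > li'))

# Group selector: all a tags and all li tags

print(soup.select('a,li'))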
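The local HTML file used above is not shown, so the sketch below runs the same calls against a small hypothetical stand-in. Its ids, classes and texts are guesses chosen to fit the selectors above. It swaps in Python's built-in `'html.parser'` for `'lxml'` so it runs without lxml installed; for markup this simple, the results should be the same.

```python
from bs4 import BeautifulSoup

# Hypothetical stand-in for file/bs4解析本地文件.html; the real file may differ
html = '''
<div>
  <ul>
    <li id="l1">Zhang San</li>
    <li id="l2">Li Si</li>
    <li>Wang Wu</li>
  </ul>
  <a href="https://example.com" id="a1" class="a1">link 1</a>
  <span>span text</span>
</div>
<a href="https://example.org" title="a2">link 2</a>
'''

soup = BeautifulSoup(html, 'html.parser')

print(soup.a.attrs)                                  # attributes of the first <a>
print(soup.find('a', title='a2').get_text())         # text of the a tag with title="a2"
print(len(soup.find_all(['a', 'span'])))             # both a tags plus the span
print(len(soup.find_all('li', limit=2)))             # stops after two matches
print([t.get_text() for t in soup.select('li[id]')])
print([t.get_text() for t in soup.select('div > ul > li')])
print(len(soup.select('a,li')))                      # three li tags and two a tags
```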

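Node information: getting a node's content

obj = soup.select('#d1')[0]

# If the tag contains only text, both .string and get_text() return that text

# If the tag has more than one child (for example text plus a child tag), .string returns None, while get_text() returns all the text inside it

# In general, get_text() is recommended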

print(obj.string)

print(obj.get_text())
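A small hypothetical example makes the `.string` / `get_text()` difference visible. `#d1` mixes a child tag with text, and `#d2` holds text only. It uses the built-in `'html.parser'` so no extra parser is needed.

```python
from bs4 import BeautifulSoup

# Hypothetical markup: #d1 has two children (a <span> and a text node), #d2 has one
html = '<div id="d1"><span>Hello</span> world</div><div id="d2">plain text</div>'
soup = BeautifulSoup(html, 'html.parser')

mixed = soup.select('#d1')[0]
plain = soup.select('#d2')[0]

print(mixed.string)      # None, because the tag has more than one child
print(mixed.get_text())  # all text inside, including the span's
print(plain.string)
print(plain.get_text())
```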

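# Node attributes

# Why [0]? select returns a list, and a list has none of a tag's properties such as name or attrs

obj = soup.select('#p1')[0]

# name is the tag's name

print(obj.name)

# attrs returns the attributes as a dict

print(obj.attrs)

# Three ways to read a single attribute; class can hold several values, so each returns a list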

obj = soup.select('#p1')[0]

print(obj.attrs.get('class'))

print(obj.get('class'))

print(obj['class'])
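The three attribute lookups differ in two ways that a short hypothetical example shows. bs4 returns `class` as a list because an element can have several classes. `.get()` returns None for a missing attribute, while `[...]` raises KeyError.

```python
from bs4 import BeautifulSoup

# Hypothetical markup with two classes and an id
soup = BeautifulSoup('<p id="p1" class="intro big">text</p>', 'html.parser')
p = soup.select('#p1')[0]

print(p.name)          # the tag name
print(p['class'])      # a list, since class is multi-valued
print(p.get('id'))     # single-valued attributes come back as plain strings
print(p.get('title'))  # None: .get() does not raise for a missing attribute

try:
    p['title']         # indexing a missing attribute raises instead
except KeyError:
    print('KeyError for a missing attribute')
```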

Shortcut to open the XPath browser extension: Ctrl+Shift+X

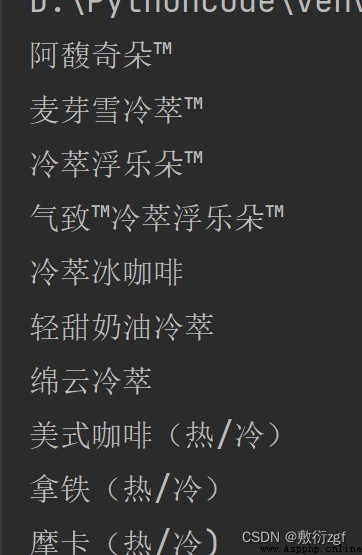

import urllib.request

url = 'https://www.starbucks.com.cn/menu/'

response = urllib.request.urlopen(url)

content = response.read().decode('utf-8')

from bs4 import BeautifulSoup

soup = BeautifulSoup(content,'lxml')

# XPath equivalent: //ul[@class='grid padded-3 product']//strong/text()

name_list = soup.select('ul[class="grid padded-3 product"] strong')

for name in name_list:
    print(name.get_text())
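An exact-match attribute selector on `class` only matches when the whole attribute string is identical, so it breaks if the site reorders or adds a class. The sketch below matches the three class tokens instead, in any order, and splits fetching from parsing so the parsing can be tried on a hypothetical snippet. The helper names, the User-Agent value and the sample markup are assumptions. The live page may have changed or may render its menu with JavaScript.

```python
import urllib.request

from bs4 import BeautifulSoup

MENU_URL = 'https://www.starbucks.com.cn/menu/'


def fetch(url):
    """Download a page with a browser-like User-Agent (the header value is an assumption)."""
    request = urllib.request.Request(url, headers={'user-agent': 'Mozilla/5.0'})
    with urllib.request.urlopen(request) as response:
        return response.read().decode('utf-8')


def product_names(html):
    """Return the text of every <strong> inside the product grid."""
    soup = BeautifulSoup(html, 'html.parser')
    # All three class tokens must be present, in any order
    return [tag.get_text(strip=True) for tag in soup.select('ul.grid.padded-3.product strong')]


# Hypothetical markup shaped like the menu page; the live page may differ
sample = ('<ul class="grid padded-3 product">'
          '<li><strong>Latte </strong></li><li><strong>Mocha</strong></li></ul>')
print(product_names(sample))
# Against the live site: print(product_names(fetch(MENU_URL)))
```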